Ok... so I am not writing about the products themselves. I shall get to that - Siebel's BI products, their analytic platform, components, the direction going forward, the other stuff - eventually...

But in the meantime, this past week has seen a surge in BI-related announcements from Oracle management. This can be attributed to the fact that the Siebel acquisition has cleared the shareholder vote (99% of shareholders voted for the merger - see link to story on eWeek), and now Oracle is starting to spell out, publicly, its strategy for the different products and technologies that it is getting from Siebel. Oracle had made it clear when it announced the acquisition that Siebel CRM would be "the centerpiece of our CRM strategy going forward... (we) will continue to sell PeopleSoft CRM, Oracle CRM, but Siebel will be the centerpiece," according to Larry Ellison (link to story on C|Net).

As Oracle dug deeper into the products and technologies that Siebel had, it emerged that Siebel's analytics applications and suite were also an appealing offering.

Read below for what Oracle executives have had to say about this topic:

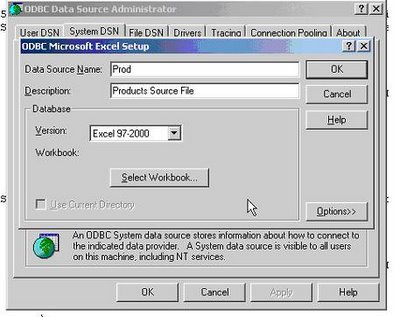

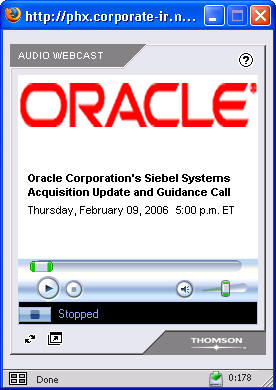

There was an "Oracle's Siebel Systems Acquisition Update and Guidance Call" with analysts on February 9, 2006, where Oracle executives discussed the acquisition of Siebel Systems (link to Oracle Investor page). The webcast will be available only through Feb 14, so while the link here will take you to the Oracle.com investor relations page, the link on that page to the webcast itself may not work a week from now.

(The image at the left shows the audio webcast stream)

Anyway, about 32 minutes into the call, a CSFB analyst raised the BI question and wanted to know more about Oracle's intentions regarding Siebel's analytics solutions and the sales force associated with selling those products and applications.

Charles Phillips, Oracle's President (link to the Oracle Executives page), replied to that question. The gist of his reply (and while I did try to type down what was being said, I may not have got all of it correctly, so please do not hold me to the exact words) was twofold: the first part dealt with the organization of the Siebel Analytics sales force within Oracle, and the second part with the product suite itself.

He (Charles Phillips) mentioned that Siebel managed to generate a lot of revenue with a small number of analytics reps. They had 31 sales reps and about 30 pre-sales reps (their analytics revenues were $100+ million last year). Oracle sales reps obviously also have a lot of experience and skill in selling middleware, databases, ETL tools, and business intelligence, and the post-acquisition direction is to bring these Oracle people (with their BI selling skills) together with the Siebel Analytics sales reps into a combined, expanded sales force.

The second part of his reply restated what Oracle executives (including Charles Phillips) have already said publicly: that the Siebel Business Analytics suite brought to the table a piece of the solution that had been missing in Oracle's middleware stack, namely a world-class analytic product. Now that Oracle has this product, it can go out and aggressively sell BI with it. This addition is also one of the most exciting pieces on the technology side that come to Oracle with this acquisition.

Larry Ellison added that close to 25% of Siebel's license revenue came from selling these analytic apps, and that Siebel sold them attached to its own apps 95% of the time. Oracle would expand on this, attaching the analytic apps not only to the Siebel (CRM) stack but also to PeopleSoft and Oracle apps.

Larry also stated that a lot of the BI technology comes out of the database (consider BI-related database technologies such as materialized views (MVs), partitioning, OLAP, SQL Analytics, Data Mining, etc.). If, on the other hand, you shave away the database's contribution to BI, look only at the middleware, and compare Oracle's products with Siebel's, Siebel has already built the next generation of metadata-driven business intelligence and has the best product on the market.

The intent is clear: Oracle is very, very serious about business intelligence, and we now also have the kind of complete solution that can go out, compete, and win, every single time. This is an exciting time to be in BI at Oracle (err... yes, really). You have the product(s), you have the intent, and you also have the executive visibility that can drive people to achieve that much more.

You can also read about Oracle's comments on BI and Siebel in a press report in CRN (link to CRN page):

"In addition, Oracle plans to highlight Siebel's analytics know-how and will push those tools not only as standalone products but with its middleware, Ellison said, calling those tools a 'hidden jewel' of the $5.85 billion Siebel acquisition, which was completed last week. Siebel's analytics technology will 'absolutely' be the foundation of Oracle's business intelligence effort going forward, Ellison said. There also will be an analytics 'overlay' sales force to assist on sales of business intelligence that will work with applications and middleware sales teams, Ellison said."

Separately, BizIntelligencePipeline (link to article) ran a story in which Gartner estimates that the market for BI will reach $2.5 billion this year:

"The Stamford, Conn.-based firm further projects ongoing growth through 2009, when license revenue is expected to reach $3 billion. The findings, based on a Gartner survey of 1,400 CIOs, indicate that business intelligence has surpassed security as the top technology priority this year."

George Shaheen, Siebel's CEO when Oracle and Siebel agreed on the deal, had this to say about BI and Siebel (link to story on SearchCRM.com dated Oct 19 2005):

"That is an absolutely sleeping giant in our portfolio. That's a $15 billion to 18 billion market where there's no one clear market leader. I had very high expectations for the role of business analytics."

The person who ran the analytics show at Siebel is Larry (yes, another Larry) Barbetta, who had founded nQuire, an analytics company that Siebel acquired in 2001. This is what he had to say about analytics in the same article:

"It's beyond intuitive, it's obvious," said Larry Barbetta, senior vice president and general manager of Siebel business analytics. "That's what the goal needs to be." Oracle's plans for the future of Siebel's Business Analytics application has yet to be determined, as does its interoperability. "We have a stated mission to interact with all systems," Barbetta said. "You want to know about Oracle, there's another Larry [Ellison] you need to talk to."

You can read more about the Siebel Analytics Platform, its analytic applications, and the components therein at the Siebel web site (link to page).

And as if that were not enough, a day earlier, on Feb 08 2006, Larry Ellison (link to story on C|Net) stated at the 2006 Credit Suisse Global Software Conference (link to conference page) that Oracle would be willing to make acquisitions in the BI space to become the #1 player there...

Snippets from the article:

"Ellison told a Credit Suisse investor conference that the business intelligence and middleware market--which he considered as one--represented an area where Oracle was clearly not the leader and needed to make deals to gain a leading position. 'We are clearly not No. 1 in middleware,' said Ellison, whose remarks were Webcast. 'You will see us do a number of things in that space, including acquisitions.'"

More details to follow in March - watch this blog.

Addition: Added a section on Larry Ellison's announcement at the CSFB conference about Oracle's intention to make BI-related acquisitions.